Infrastructure as Code - An Introduction

An experienced Software engineer with extensive knowledge in Fullstack development ( Java - Angular 8+ ) and Data Science.

Managing IT infrastructure used to be a difficult task. All of the hardware and software required for the programmes to run had to be manually managed and configured by system administrators.

However, things have altered considerably in recent years. Cloud computing has transformed and improved the way businesses plan, create, and sustain their IT infrastructure.

One of the most important aspects of this paradigm is Infrastructure as Code and that's what we'll explore today.

What Is Infrastructure as Code?

Infrastructure as Code (IaC) is the practice of managing and provisioning infrastructure through code rather than manual processes. It is a descriptive model for managing infrastructure (networks, virtual machines, load balancers, and connection topologies) that uses the same versioning the DevOps team uses for source code.

An IaC model generates the same environment every time it is applied, just as the same source code generates the same binary. Because the infrastructure specification lives in configuration files, configurations are easier to change and distribute.
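To make the "same specification, same environment" idea concrete, here is a minimal sketch in Python. It does not use any real IaC tool: the `SPEC` dictionary, its field names, and the `build_environment` function are assumptions made up for illustration. The point is only that the environment is derived from the specification alone, so applying it twice gives the same result.

```python
import json

# Hypothetical specification; field names and values are illustrative only.
SPEC = {
    "network": {"cidr": "10.0.0.0/16"},
    "virtual_machines": {"count": 2, "size": "small"},
    "load_balancer": {"port": 443},
}


def build_environment(spec):
    """Expand a specification into a concrete, ordered list of resources."""
    resources = [{"type": "network", "name": "net-0", "cidr": spec["network"]["cidr"]}]
    vm_names = [f"vm-{i}" for i in range(spec["virtual_machines"]["count"])]
    for name in vm_names:
        resources.append(
            {"type": "vm", "name": name, "size": spec["virtual_machines"]["size"]}
        )
    resources.append(
        {
            "type": "load_balancer",
            "name": "lb-0",
            "port": spec["load_balancer"]["port"],
            "targets": vm_names,
        }
    )
    return resources


# Applying the same specification twice yields the same description.
first = build_environment(SPEC)
second = build_environment(SPEC)

print(json.dumps(first, indent=2))
print("Identical on every run:", first == second)
```

Because the function depends only on its input, changing the environment means changing `SPEC`, and that change can be reviewed and versioned like any other code.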

Why use IaC?

Now that the "what" is out of the way, let's focus on why we would want to use IaC and what its competitive advantage is.

Infrastructure administration and configuration were formerly done manually. Each environment had its own unique configuration that was set up by hand, which resulted in a number of issues, including:

Cost: You'll need to pay a lot of people to manage and maintain the infrastructure.

Scaling: Manual configuration takes time, which makes it difficult to handle surges in demand.

Inconsistency: Manual configuration is prone to errors, and errors are unavoidable when multiple individuals configure different environments by hand.

Whereas through IaC, you can achieve the following:

Speed & Simplicity: IaC can deliver the full infrastructure by simply running a script.

Consistency: Everything is specified as code, which results in fewer errors than manual configuration.

Risk minimization: It enables a larger group of individuals to collaborate more effectively on infrastructure configuration and administration.

Cost Minimization: It will allow you to spend less time on routine infrastructure deployment activities and more time on more valuable tasks.

Reusability: It improves reusability with minimal adjustments.

Process Automation: As part of the continuous delivery process, it enables automation of the entire process from setup to removal.

Furthermore, describing infrastructure as code brings the following benefits:

It allows infrastructure to be readily integrated with version control, making infrastructure modifications trackable and auditable.

It provides the ability to automate infrastructure management to a large extent. As a result of all of this, IaC is being integrated into CI/CD pipelines as a key component of the Software Development Life Cycle (SDLC).

Manual infrastructure provisioning and administration is no longer necessary. As a result, users can more easily manage the inevitable configuration drift in the underlying infrastructure and keep all environments within the intended configuration.

Challenges of IaC

Each new approach introduces a new set of issues, and IaC is no exception. The same characteristics that make IaC so effective and efficient also provide enterprises with some unique problems. Here's a quick rundown of what they're all about:

Accidental Destruction

In theory, once automated systems are up and running, they don't require ongoing supervision beyond periodic maintenance and replacement. In practice, even automated systems have issues, and these issues can build up over time into major system-level failures. This gradual degradation is sometimes called erosion.

Configuration Drift

Automated configuration can still result in parts of the infrastructure drifting apart over time. A fix applied to one server, for example, may not be reproduced across all servers. Although differences aren't necessarily bad, they must be documented and managed.
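Drift of this kind can be detected by comparing what each server actually reports against the intended configuration. The sketch below is only an illustration under stated assumptions: the server names, the `patch_level` and `max_connections` settings, and the `find_drift` helper are all hypothetical and not taken from any particular tool.

```python
def find_drift(desired, actual):
    """Return {server: {setting: (desired_value, actual_value)}} for every mismatch."""
    drift = {}
    for server, settings in actual.items():
        diffs = {
            key: (want, settings.get(key))
            for key, want in desired.items()
            if settings.get(key) != want
        }
        if diffs:
            drift[server] = diffs
    return drift


# Hypothetical intended configuration shared by all servers.
DESIRED = {"patch_level": 7, "max_connections": 500}

# Hypothetical reported state: the fix reached web-1 but not web-2.
ACTUAL = {
    "web-1": {"patch_level": 7, "max_connections": 500},
    "web-2": {"patch_level": 6, "max_connections": 500},
}

drift = find_drift(DESIRED, ACTUAL)
print(drift)
```

A report like this does not fix anything by itself, but it turns silent differences into something that can be documented and managed.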

Lack of Expertise

Creating definition files and testing them to verify that they perform properly requires a thorough understanding of the organization's IT architecture. That's a specialized set of skills.

Lack of Proper Design & Planning

Many unknowns surround automation projects, so it's critical to identify and address them early on in the planning process. Continuous testing and phased execution of automation projects can provide sceptics with the information and confidence they need to lead more automation projects in the future.

Error Replication

In manual operations, it's relatively simple to trace human actions and reproduce errors. With automation, reproducing errors becomes a harder problem: system administrators may not be able to recreate an error scenario accurately just by analyzing log files, workflows, and other data.

Principles of IaC

1. Easy System Reproducibility

Your IaC approach should make it simple and quick to create and rebuild any part of your IT infrastructure. It shouldn't require a lot of human work or complicated decision-making.

All of the tasks involved must be coded into the definition files, from selecting the software through configuring it. The scripts and tools that handle resource provisioning should have enough data to complete their duties without the need for human intervention.

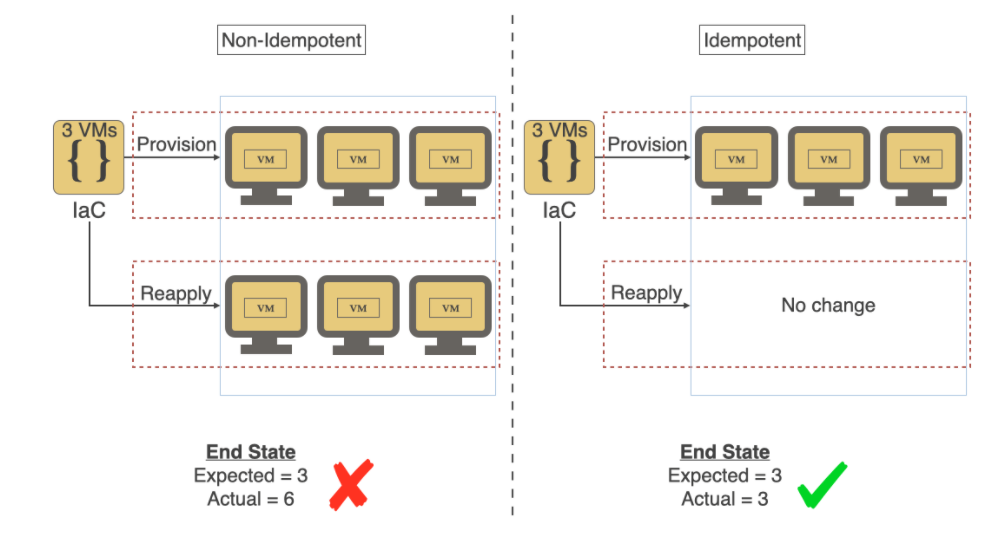

2. Idempotence

Meticulous corporate leaders naturally view automated systems, and their ability to handle difficult tasks, with suspicion. As a result, IaC must produce consistent results no matter how many times it is run. When new servers are installed, for example, they must be identical (or nearly identical) in capacity, performance, and reliability to the existing servers. This way, whenever new infrastructure parts are added, all decisions are automated and predetermined, from configuration to hostnames.

*Photo Credit: https://shahadarsh.com/*
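Idempotence is easiest to see in a small example. The following Python sketch assumes an in-memory `inventory` dictionary standing in for real infrastructure, and a hypothetical `ensure_servers` function with a made-up naming scheme (`app-0`, `app-1`, ...). Running it a second time with the same inputs changes nothing.

```python
def ensure_servers(inventory, desired_count, size):
    """Bring the inventory to the desired state and return the actions taken."""
    actions = []
    wanted = [f"app-{i}" for i in range(desired_count)]

    # Create or correct every server that should exist.
    for name in wanted:
        if inventory.get(name) != {"size": size}:
            inventory[name] = {"size": size}
            actions.append(f"create-or-update {name}")

    # Remove servers that are not part of the desired state.
    for name in sorted(set(inventory) - set(wanted)):
        del inventory[name]
        actions.append(f"remove {name}")

    return actions


inventory = {}  # stands in for the real infrastructure
first_run = ensure_servers(inventory, 3, "medium")
second_run = ensure_servers(inventory, 3, "medium")

print(first_run)   # three create-or-update actions
print(second_run)  # no actions: the state already matches
```

The function describes the end state ("three medium servers") rather than a sequence of steps ("add three servers"), which is what makes repeated runs safe.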


3. Repeatable Processes

System administrators naturally prefer tasks that are easy to understand. When it comes to resource allocation, they like to work intuitively: assess resource requirements, define best practices, and allocate resources.

Effective as it is, such a procedure works against automation. IaC forces system administrators to think in terms of scripts. Their tasks must be broken down or grouped into repeatable processes that can be scripted.

4. Disposable Systems

IaC relies heavily on reliable and robust software to make hardware reliability irrelevant to system operations. In the cloud era, organizations must not allow hardware failures to impact their businesses, even though the underlying hardware may not always be trustworthy. Software-level resource provisioning therefore ensures that, in the event of a hardware breakdown, alternate hardware is quickly allocated so that IT operations are not disrupted.

At the heart of IaC is fluid infrastructure that can be created, destroyed, resized, moved, and replaced. It should be able to seamlessly handle infrastructure changes such as resizing and expansions.

5. Ever-evolving Design

The IT infrastructure design is always changing to meet the organization's changing needs. Because infrastructure modifications are costly, businesses try to keep them to a minimum by rigorously anticipating future requirements and designing systems accordingly. The resulting designs are unnecessarily complex, which makes future adjustments considerably more difficult and costly.

This challenge is addressed by IaC-driven cloud infrastructure, which simplifies change management. While present systems are designed to fulfil current needs, future modifications must be simple to adopt. The best way to ensure that change management is simple and quick is to make regular changes so that all stakeholders are aware of the usual concerns and can develop scripts to successfully address them.

6. Self-Documentation

Teams constantly strive to ensure that their documentation stays relevant, useful, and accurate. Someone may write a detailed document for a new procedure, but such documents are rarely kept up to date as the way things are done changes. In addition, gaps in the documentation appear frequently. People come up with their own shortcuts and tweaks, and some build their own scripts to make the process go more smoothly.

Although documentation is frequently used to maintain continuity, conventions, and even legal compliance, it tends to be an idealized portrayal of what happens in reality. With infrastructure as code, the scripts, definition files, and resources that implement a process capture the steps for carrying it out. Only a small amount of additional documentation is required to get people started. That documentation should be kept close to the code it describes so that it is readily available when people make changes.

GitOps & IaC

GitOps is an operational framework that takes DevOps best practices used for application development, such as version control, collaboration, compliance, and CI/CD tooling, and applies them to infrastructure automation. While the software development lifecycle has become increasingly automated, infrastructure has largely remained a manual process requiring specialized teams.

With the increasing demands on today's infrastructure, infrastructure automation has become increasingly important. Modern infrastructure must be able to handle cloud resources effectively in order to support continuous deployments.

Another way to implement IaC is GitOps, which extends IaC with a procedure (the pull request process) for applying changes to production or any other environment. It can also include a control loop that routinely checks that the actual state of the infrastructure matches the desired state. It will, for example, ensure that any modifications made directly to infrastructure are reverted to the desired state as defined in source control.
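The control loop described above can be sketched in a few lines of Python. This is an illustration only, not how any specific GitOps tool is implemented: the `desired` dictionary stands in for the state defined in source control, `actual` stands in for the live infrastructure, and the `reconcile` function and resource names are hypothetical.

```python
def reconcile(desired, actual):
    """One pass of the control loop: make `actual` match `desired`."""
    changes = []

    # Apply anything that is missing or differs from the desired state.
    for name, config in desired.items():
        if actual.get(name) != config:
            actual[name] = dict(config)
            changes.append(("apply", name))

    # Delete anything that exists but is not defined in source control.
    for name in [n for n in actual if n not in desired]:
        del actual[name]
        changes.append(("delete", name))

    return changes


# Hypothetical desired state, as it would be read from the repository.
desired = {"web": {"replicas": 3}, "db": {"replicas": 1}}
actual = {"web": {"replicas": 3}, "db": {"replicas": 1}}

# Someone changes the infrastructure directly, bypassing the pull request process.
actual["web"]["replicas"] = 5
actual["debug-box"] = {"replicas": 1}

changes = reconcile(desired, actual)
print(changes)
print(actual == desired)
```

In a real setup this pass would run on a schedule or in response to events, and the only supported way to change `desired` would be a reviewed pull request.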

A real-life Example

A key constraint in software development is that the environment in which newly written code is tested must exactly match the environment in which that code will be deployed. This is the only way to ensure that new code does not conflict with existing code definitions and produce errors or conflicts that could endanger the entire system.

In the past, software delivery followed a pattern like this:

A System Administrator would set up a physical server and install the operating system, including all necessary service packs and tuning, to match the state of the live production machine.

The supporting database would then be set up by a Database Administrator, and the system would be handed over to a testing team.

The code/program would be delivered to the test machine by the developer, and the test team would execute many operational and compliance tests.

Once the code had completed the full procedure, it would be deployed to the live, operational environment. In many cases, the new code would not function properly, necessitating more troubleshooting and rework.

A live environment clone made with the same IaC as the live environment guarantees that if it works in the cloned environment, it will also work in the live environment.

Consider a software delivery pipeline that includes DEV, UAT, and Production environments. Given that the early environments are crucial for testing the quality and production readiness of a software build, there is little value in having a DEV or UAT environment that isn't an exact mirror of the production environment.
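One way to keep DEV, UAT, and Production aligned is to derive all three from a single shared definition. The sketch below assumes a hypothetical `BASE_DEFINITION` with made-up image, runtime, and database values; the `make_environment` and `same_shape` helpers are likewise illustrative rather than part of any real tool.

```python
import copy

# Hypothetical shared definition; names and versions are illustrative only.
BASE_DEFINITION = {
    "os_image": "linux-base-v1",
    "app_server": {"runtime": "runtime-v2", "instances": 2},
    "database": {"engine": "example-db", "version": "15"},
}


def make_environment(name):
    """Create an environment description from the single shared definition."""
    environment = copy.deepcopy(BASE_DEFINITION)
    environment["name"] = name
    return environment


def same_shape(env_a, env_b):
    """Compare two environments while ignoring their names."""
    def strip_name(env):
        return {key: value for key, value in env.items() if key != "name"}

    return strip_name(env_a) == strip_name(env_b)


dev, uat, prod = (make_environment(n) for n in ("dev", "uat", "prod"))
print(same_shape(dev, prod), same_shape(uat, prod))

# A manual tweak to one environment breaks the mirror and is easy to detect.
dev["database"]["version"] = "14"
print(same_shape(dev, prod))
```

Since every environment is a copy of the same definition, any difference between them must come from a change made outside the definition, which is exactly what a check like `same_shape` surfaces.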

Virtualization made it possible to speed up this process, particularly when it came to constructing and maintaining a test server that would mimic the live environment. However, because the procedure was manual, a human would be required to develop and update the machine on a regular basis.

These processes became even more agile after the introduction of DevOps. Automation replaces human intervention in the server virtualization and testing phases, increasing productivity and efficiency.

It is now possible for a developer to carry out all of these tasks themselves:

The developer creates the application code and the configuration management instructions that trigger actions in the virtualized environment, as well as in other environments such as the database, appliances, testing tools, delivery tools, and others.

Upon the delivery of new code, the configuration management instructions create a new virtual test environment with an application server and database instance that exactly mirrors the structure of the live operational environment, in terms of both service packs and versioning, and transfer live data into it. (This is the part of the process where Infrastructure as Code is used.)

Then, using a set of tools, the essential compliance checks, as well as error detection and resolution, will be carried out. After that, the updated code is ready to be deployed in a live IT environment.

Technologies & Tools

AWS CloudFormation, Red Hat Ansible, Chef, Puppet, SaltStack, and Terraform are examples of infrastructure-as-code tools. Some tools employ a domain-specific language (DSL), while others use YAML or JSON as a standard template format.

When choosing a tool, companies should think about where they want to deploy it. AWS CloudFormation, for example, is designed to provision and manage infrastructure on AWS and integrates with other AWS services. Chef, on the other hand, works with on-premises servers as well as a variety of cloud provider infrastructure-as-a-service options.

Hope you enjoyed reading this blog as much as I enjoyed writing it. If you have any comments or suggestions, please feel free to reach out ❤